Are You Ready for AI Workloads and Inferencing?

Artificial intelligence seems to have taken over the world as it becomes embedded in every technology. But the truth is that this is just the beginning. We are entering a new phase of the AI revolution that will exponentially increase AI workloads across the IT infrastructure. This blog explores the pending growth of AI workloads and poses the question: Are you ready?

Evolving from Training to Inferencing

High-performance compute is necessary for two different types of AI workloads: training and inferencing.

- IBM defines training as the process of “teaching” a machine learning model to optimize performance on a training dataset of sample tasks relevant to the model’s eventual use cases.1

- Oracle defines inference as the ability of AI, after much training on curated data sets, to reason and draw conclusions from data it hasn’t seen before.2

- “Think of it this way: if training is like teaching an AI a new skill, inference is that AI actually using the skill to do a job,” explains Google Cloud.3

One reason AI workloads are expected to increase significantly in 2026 and beyond is that many AI use cases that were in training mode or in pilot programs are now going into production. A 2026 Deloitte report confirms, “Today, 25% of respondents said their organization has moved 40% or more of their AI experiments into production to-date; however, 54% expect to reach that level in the next three-to-six months.”4 This means that while compute demand from training will continue to grow, inferencing will add considerable demand of its own.

“By 2030, AI could represent half of all workloads with inference becoming the primary driver,” according to the 2026 Global Data Center Outlook from JLL. “AI only represented about a quarter of all data center workloads in 2025, with training driving most of the demand. However, a significant shift is anticipated in 2027, when inference workloads could overtake training as the dominant AI requirement.”5

Deloitte expects the shift even sooner: “Inference workloads will indeed be the hot new thing in 2026, accounting for roughly two-thirds of all compute.”6

Cloud vs. Data Center

In this new production phase of AI, enterprises must decide where they are going to host AI workloads and the corresponding data. Moving AI Data at the Speed of Innovation, a report from Riverbed, explains, “AI workloads, especially those involving large language models and real-time analytics, require terabytes to petabytes of data to be moved daily. One of the most significant [costs] is cloud egress fees, which are charged when data is transferred out of cloud environments – between different cloud providers or different regions within the same cloud provider, or to on-premises systems. These fees can be substantial when dealing with petabytes of data. It can easily cost $80,000 in egress charges to move 1 PB of data out of a cloud provider.”7
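To put the quoted figure in perspective, here is a minimal back-of-the-envelope sketch. It assumes a flat rate derived from the Riverbed number ($80,000 per PB) and decimal units (1 PB = 1,000,000 GB). Real egress pricing is typically tiered and varies by provider, region and destination, so treat the function names and the sample volumes below as hypothetical illustrations rather than a pricing model.

```python
# Illustrative estimate only. The flat per-PB rate comes from the Riverbed
# figure quoted above; actual cloud egress pricing is usually tiered.
ASSUMED_COST_PER_PB_USD = 80_000
GB_PER_PB = 1_000_000  # decimal units: 1 PB = 1,000,000 GB


def egress_cost_usd(data_pb: float,
                    cost_per_pb_usd: float = ASSUMED_COST_PER_PB_USD) -> float:
    """Estimate egress charges for moving `data_pb` petabytes at a flat rate."""
    if data_pb < 0:
        raise ValueError("data_pb must be non-negative")
    return data_pb * cost_per_pb_usd


def implied_rate_per_gb(cost_per_pb_usd: float = ASSUMED_COST_PER_PB_USD) -> float:
    """Convert the per-PB figure into the per-GB rate it implies."""
    return cost_per_pb_usd / GB_PER_PB


if __name__ == "__main__":
    print(f"Implied rate: ${implied_rate_per_gb():.2f} per GB")
    for pb in (0.5, 1, 5):  # hypothetical transfer volumes
        print(f"{pb} PB -> ${egress_cost_usd(pb):,.0f}")
```

Under these assumptions, the quoted figure works out to roughly $0.08 per GB, and the cost scales linearly with the volume moved. That is why recurring petabyte-scale transfers add up quickly.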

“When organizations launch AI projects, they often turn to public cloud computing platforms to give them the computing power they need – fast and without requiring a big upfront investment in hardware,” according to Deloitte. “But as AI usage scales, these cloud costs can balloon fast. More than half of the data center leaders we surveyed say they plan to incrementally move AI workloads off the cloud when their data-hosting and computing costs hit a certain threshold.”8

From Pilot to Production: The State of AI Adoption in 2025, a report from Prove AI, found that more than two-thirds (67%) of respondents plan to migrate some AI data to non-cloud environments by May 2026. “This trend underscores a move towards hybrid or on-premises solutions, with 66% of respondents agreeing that non-cloud AI deployments offer greater security and 68% agreeing they are more efficient for managing AI datasets and models.”9

However, on-premises data centers are not usually the most efficient candidates to host AI workloads and data either. “The physical requirements of AI are outgrowing most on-prem environments,” explains Juan Font, President and CEO of CoreSite and SVP of American Tower. “High-density GPUs and specialized accelerators demand levels of power and cooling that typical enterprise [on-premises] data rooms just weren’t designed for. You can try to retrofit your own facilities, but you’re looking at major upgrades. And even then, you’re still unlikely to match the scale, flexibility and network performance of a purpose-built data center.”10

Colocation Data Centers Built for AI Workloads

As enterprises scale AI use cases, the decision of where to host AI workloads is critical. This is where multi-tenant colocation offers a powerful and cost-effective alternative to both the cloud and on-premises data centers.

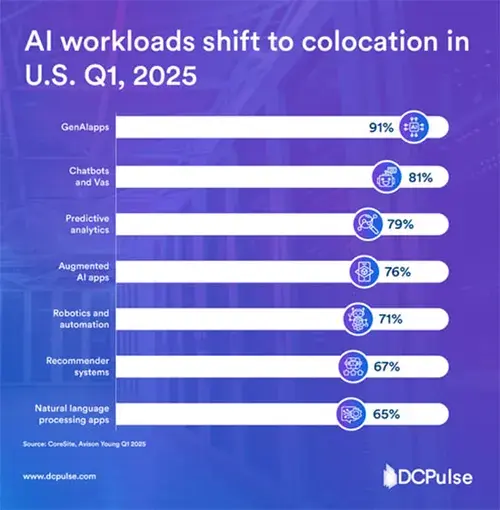

“Businesses are moving AI workloads from traditional cloud environments to colocation facilities. Many early adopters used public cloud services to develop AI and ML, but a recent survey shows that IT leaders and business owners are increasingly choosing colocation because it helps them control costs, scale up and maintain data privacy,” according to a 2025 study from DCPulse, adding that many businesses cannot upgrade their legacy on-premises data centers to handle the power, cooling and rack densities that large AI clusters demand.11

The study found that 91% of respondents plan to use GenAI apps in colocation settings, followed by chatbots and virtual assistants at 81% and predictive analytics at 79%. “This trend illustrates how companies are adjusting the balance between cloud-native and colocation models to better handle heavy AI workloads while also improving compliance and operational resilience.”11

CoreSite multi-tenant data centers can provide right-sized, customized infrastructure with scalable capacity to meet the demands of your AI workloads and help you minimize the exorbitant egress fees charged by hyperscalers. Just as important, CoreSite can serve as a hub for your hybrid infrastructure strategy with direct onramps to all the major cloud providers; a fast, reliable and secure connection to your on-premises data center; and an interconnected ecosystem of network carriers and related services.

CoreSite's multiple data center campuses – strategically located in key markets across the U.S. – can also be leveraged to place your inferencing workloads close to the applications they serve, reducing latency and improving AI performance. For these reasons, even providers of “neocloud,” a new generation of cloud specially designed to handle AI workloads, host their services at CoreSite facilities.

Is your infrastructure ready for AI workloads? CoreSite data centers offer the low-latency performance, guaranteed bandwidth, robust redundancy and AI-ready power and cooling capabilities necessary for the next phase of AI.

Know More

Visit CoreSite’s Knowledge Base to learn more about the ways in which data centers are meeting constantly increasing power and other infrastructure requirements.

The Knowledge Base includes informative videos, infographics, articles and more, all developed or curated to educate. This digital content hub highlights the pivotal role data centers play in transmitting, processing and storing vast amounts of data across both wireless and wireline networks – acting as the invisible engine that helps keep the modern world running smoothly.

References

1. What is model training?, IBM (source)

2. What Is AI Inference?, Jeffrey Erickson, Content Strategist, Oracle, April 2, 2024 (source)

3. What is AI inference?, Google Cloud (source)

4. The State of AI in the Enterprise, Deloitte, 2026 (source)

5. 2026 Global Data Center Outlook, JLL (source)

6. Why AI’s next phase will likely demand more computational power, not less, Duncan Stewart, Jeroen Kusters, Deb Bhattacharjee, Arpan Tiwari, Girija Krishnamurthy, Karthik Ramachandran, Deloitte, November 18, 2025 (source)

7. Moving AI Data at the Speed of Innovation, Riverbed, 2026 (source)

8. As cloud costs rise, hybrid solutions are redefining the path to scaling AI, Diana Kearns-Manolatos, Senior Manager, Subject Matter Specialist, Deloitte Center for Integrated Research, Deloitte Services LP, November 5, 2025 (source)

9. From Pilot to Production: The State of AI Adoption in 2025, Prove AI, June 18, 2025 (source)

10. What 2025 Revealed About AI Infrastructure Problems and Requirements for 2026, Juan Font, President and CEO of CoreSite and SVP of American Tower (source)

11. AI workloads shift to colocation in U.S. Q1, 2025, DCPulse, July 17, 2025 (source)