Bring AWS Cloud Services Closer with AWS Outposts and Direct Connect

What if you could use many of the capabilities of AWS cloud services locally, without the expense of specialized, dedicated in-house resources? What if you could simplify the management of separate architectures, address data sovereignty and security risks, and stop writing code for different stacks? You can, by adding AWS Outposts to your IT stack.

In a recent webinar, Rob Czarnecki, principal product manager for AWS Outposts, and I discussed using AWS Outposts to build and run applications on-premises and in colocation data centers. Additionally, we discussed how to help your company optimize a multi-cloud and hybrid cloud deployment.

In Case You Didn’t Attend the Webinar…

An AWS Outpost is a 42U rack of servers, and a deployment can scale from one rack to 96 racks to create pools of compute and storage capacity. It uses the same servers that AWS uses in its data centers, and AWS personnel install it and connect it to the appropriate AWS Region and your local networks. It’s a turnkey, managed service. As such, it is an operational expense, one that you can add without capital investment in hardware or personnel. With an Outpost located in a CoreSite data center, you can connect through Direct Connect or a virtual private network (VPN) across a robust public internet connection.

Are Curious About AWS Outposts Advantages…

AWS Outposts are managed by the same systems as the AWS public cloud, which means that the automated tools developed to monitor the network are used to ensure resiliency, redundancy and security. Developers can use AWS APIs and other AWS services as well – accelerating and simplifying the development process. Ten AWS services, including those applicable to compute and storage, containers, databases and data analytics, run locally on Outposts (a full list is available here). More services will be added as Outposts matures.

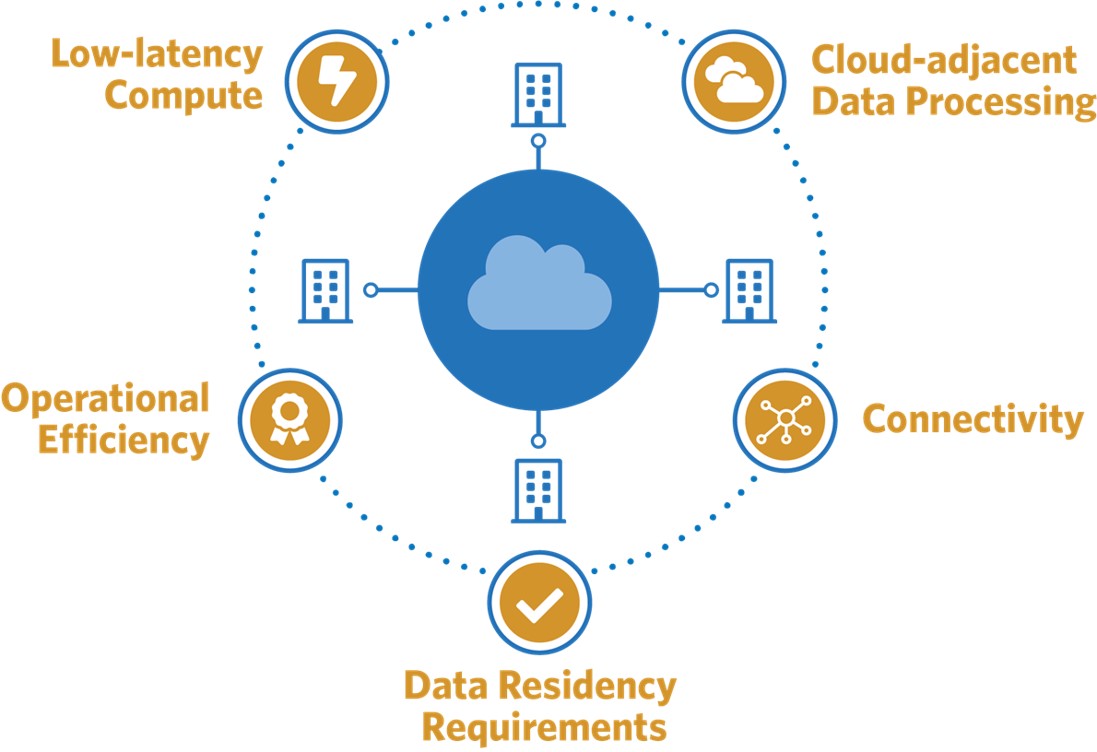

Bringing the power of Outposts to a CoreSite colocation facility ensures consistency, so latency-sensitive workloads perform as needed. There’s flexibility as well. Workloads can reside in an Outpost, but burst into the regional Availability Zone (AZ) it is “homed” to when needed. Furthermore, you can seamlessly extend your Amazon Virtual Private Cloud (VPC) on premises and connect to services available in the AWS Region.
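To make the idea of extending a VPC onto an Outpost a little more concrete, here is a minimal sketch that builds the parameters for a subnet placed on an Outpost. It assumes the EC2 CreateSubnet API's `OutpostArn` parameter; the helper name `outpost_subnet_params` and every ID, CIDR block, and ARN below are hypothetical placeholders, not values from the webinar.

```python
def outpost_subnet_params(vpc_id: str, cidr_block: str,
                          availability_zone: str, outpost_arn: str) -> dict:
    """Build keyword arguments for an EC2 CreateSubnet request on an Outpost.

    Hypothetical helper for illustration only. The Availability Zone is
    expected to be the one the Outpost is homed to.
    """
    # Light sanity check so a VPC ID or subnet ID is not passed by mistake.
    if not (outpost_arn.startswith("arn:") and ":outposts:" in outpost_arn):
        raise ValueError("expected an AWS Outposts ARN")
    return {
        "VpcId": vpc_id,
        "CidrBlock": cidr_block,
        "AvailabilityZone": availability_zone,
        "OutpostArn": outpost_arn,
    }


# Placeholder values; substitute your own VPC, CIDR range, AZ, and Outpost ARN.
params = outpost_subnet_params(
    vpc_id="vpc-0123456789abcdef0",
    cidr_block="10.0.3.0/24",
    availability_zone="us-west-2a",
    outpost_arn="arn:aws:outposts:us-west-2:123456789012:outpost/op-0123456789abcdef0",
)

# With boto3 installed and credentials configured, these could be passed as:
#   boto3.client("ec2").create_subnet(**params)
print(params)
```

Instances launched into such a subnet would run on the Outpost while remaining part of the same VPC as resources in the Region.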

Data can also reside where required, so data sovereignty regulations are addressed and companies that possess personally identifiable information (PII) can house that data according to security mandates. Of course, CoreSite provides the physical controls for security and the compliant infrastructure in all of our data centers across eight domestic markets.

Reducing latency is more critical than ever. Business applications, such as manufacturing execution systems (MES), high-frequency trading, medical diagnostics and gaming platforms, require single-digit millisecond latencies to operate effectively. Outposts run workloads where you need them to be, creating performance consistency for applications when public cloud servers are not close enough to meet application latency requirements.
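A back-of-the-envelope calculation shows why distance matters for a single-digit millisecond budget. The sketch below assumes light travels roughly 200 km per millisecond in optical fiber, and it counts propagation delay only; routing hops, queuing and processing add more. The distances are illustrative, not measurements.

```python
# Assumption: signals in optical fiber cover roughly 200 km per millisecond.
FIBER_KM_PER_MS = 200.0


def round_trip_ms(distance_km: float) -> float:
    """Lower-bound round-trip propagation delay over fiber, in milliseconds."""
    return 2 * distance_km / FIBER_KM_PER_MS


# Illustrative one-way distances between a workload and the servers it calls.
for km in (10, 100, 1000):
    print(f"{km:>5} km -> {round_trip_ms(km):.1f} ms round trip")
```

Under these assumptions, a server 1,000 km away spends about 10 ms on propagation alone, which already exceeds a single-digit millisecond target before any other delay is counted.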

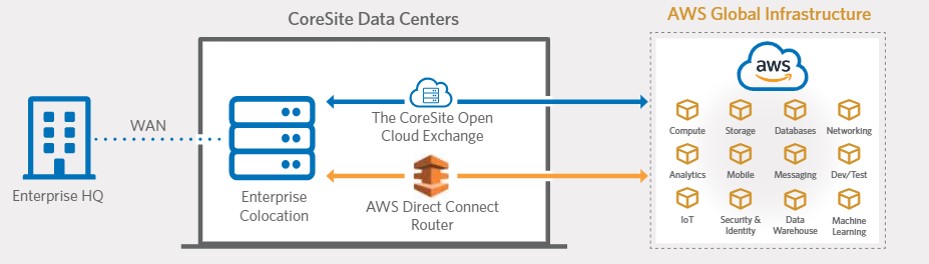

The CoreSite Open Cloud Exchange® (OCX) offers another option for connecting to the AWS global infrastructure. OCX provides direct, secure, one-to-many virtual connections that are established and managed through a portal, allowing you to choose the cloud connectivity that’s best for your use case and budget.

Other advantages discussed during the webinar? How about:

- Reduce latency by 44% – Geographic proximity to AWS compute nodes in region reduces hops

- Decrease costs by 60% – Data transferred over AWS dedicated connections benefits from reduced data rates

- Lower variability by 60% – Connecting at the edge of the AWS network backbone offers predictable network performance

And Now Want to Watch the Webinar…

Of course, there’s much more to my discussion with Rob Czarnecki. We also spent about 30 minutes on questions and answers with customers who are facing many of the same challenges as you.

That’s why we’ve made the full webcast available on-demand. After you’ve had a chance to see it, please get in touch with any questions and to explore how bringing AWS services closer can simplify hybrid IT while helping control cloud spend.