It’s All About Connections: Wireless/Wireline Framework in a Hyperconnected World

Let’s say you’re working from a laptop in your favorite coffee shop. While you’re working, you’re simultaneously listening to music streaming from Spotify. You also have multiple websites open on your laptop, each of which is hosted on a server located somewhere across the country or the world. To do this, you’re using the coffee shop’s free Wi-Fi, which itself is connected to a broadband internet service provided by that business’s internet service provider (ISP).

This is an example of modern interconnectivity, which is so ubiquitous that we almost take it for granted.

But what will interconnectivity look like in the future? As our business and personal communication processes get more sophisticated and data-driven, interconnectivity will be massively augmented. It will require significantly larger “pipes” connecting different organizations as the amount of data generated, processed and stored grows exponentially over time.

Business leaders know that they must leverage the value of data to an unprecedented level and are working feverishly to integrate and utilize all the data analysis tools at their disposal. The latest manifestation of this technological phenomenon is artificial intelligence (AI), where the totality of data you collect from your users, customers and employee base is processed iteratively to generate decision-making power. That power and the machine’s ability to continue learning are vital to a business’s ability to compete and get ahead.

The truth is simple: If you’re not hyperconnected or don’t have access to the cloud or to generative AI, you’re at a disadvantage. But those connections don’t happen in a vacuum – you have to set the right conditions to access and utilize these different digital platforms, not just for your C-suite, but for all the stakeholders in your digital supply chain.

A Crucial Convergence of Wireless and Wireline Networks

At the practical level, hyperconnectivity depends on wireless and wireline infrastructure convergence. Wireline is what the word implies: internet access in your building, home or office delivered over a physical cable, typically fiber optic. That fiber is buried underneath the street or routed through the water utility’s system or along telephone poles, and it enters through a network connection in your building or home. From there, it connects to a port on your router. Your router then takes that signal and broadcasts it wirelessly over Wi-Fi. But the Wi-Fi is fixed, in the sense that your router stays stationed in one room.

Wireless infrastructure, by contrast, carries a notion of mobility. Think of your cell phone. Access to broadband over your phone or another portable device when you’re not in a fixed location – such as when you’re riding a bus – requires tower infrastructure. Wireline and wireless convergence, in essence, means being able to leverage very high-bandwidth applications while on the move.

Twenty years ago, it was a stretch to stream videos over a home computer. Now, you can do that from almost anywhere, on your phone. And in the next few years, leveraging the increasingly sophisticated and intricate capabilities available on mobile will require wireline and wireless infrastructure to be joined at the hip.

Technology Expanding to Meet Its Own Demands

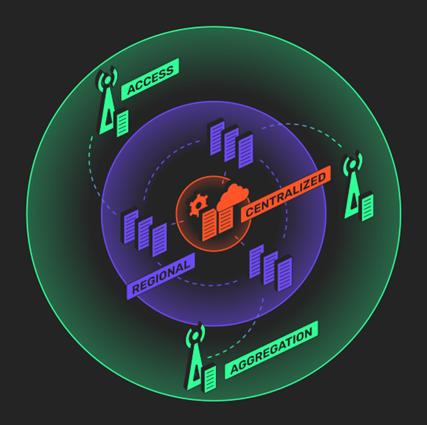

If you think about the cloud, or about artificial intelligence as a subset of it, you realize it all works because the internet today is ubiquitous. However, because workload demands are proliferating and new models require more and more capacity, infrastructure will have to be developed to deliver the almost infinite computing resources at your fingertips. And for that to become a reality, we’re going to have to move some of the workloads that are currently highly centralized to a much more distributed model.

That’s why we posit that wireline and wireless convergence is the prerequisite for the next generation of highly data-intensive services – services that, to be leveraged at full capacity, also need to be delivered with low latency. And the next generation of applications will require 10 to 100 times more bandwidth than today’s.

The Importance of Reducing “Time to Cloud”

In one sense, time to cloud is the time it takes for data to reach its destination in the cloud for either processing or storage. Reducing that time, or reducing “latency” (delays due to physical distance, network congestion, quality of networks, etc.), is a paramount concern for businesses operating today. They need to transmit larger amounts of data to farther-flung locations at ever higher speeds in order to work in an increasingly globalized environment.
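To make the distance component of latency concrete, here is a minimal Python sketch. It is a back-of-the-envelope illustration, not a measurement tool: the function names, distances, payload size and link speed are all assumptions chosen for the example, and it ignores congestion, routing hops and protocol overhead. The one physical figure it relies on is that light travels through optical fiber at roughly two-thirds of its speed in a vacuum, or about 200,000 km per second.

```python
# Rough "time to cloud" estimate: propagation delay plus transmission time.
# All inputs are illustrative assumptions, not measured values.

SPEED_IN_FIBER_KM_PER_S = 200_000  # light in glass: roughly 2/3 of c


def propagation_delay_ms(distance_km: float) -> float:
    """One-way delay caused purely by physical distance over fiber."""
    return distance_km / SPEED_IN_FIBER_KM_PER_S * 1000


def transmission_time_ms(payload_megabytes: float, bandwidth_mbps: float) -> float:
    """Time needed to push the payload onto a link of the given bandwidth."""
    payload_megabits = payload_megabytes * 8
    return payload_megabits / bandwidth_mbps * 1000


def time_to_cloud_ms(distance_km: float, payload_megabytes: float,
                     bandwidth_mbps: float) -> float:
    """Simplified one-way total: distance delay plus transmission time."""
    return (propagation_delay_ms(distance_km)
            + transmission_time_ms(payload_megabytes, bandwidth_mbps))


if __name__ == "__main__":
    # Hypothetical sites: a centralized region far away vs. a nearby edge site.
    for label, km in [("distant cloud region", 4000), ("nearby edge site", 50)]:
        total = time_to_cloud_ms(km, payload_megabytes=1, bandwidth_mbps=1000)
        print(f"{label}: {total:.2f} ms one way")
```

Under these assumptions, distance alone accounts for about 20 milliseconds each way to a site 4,000 km away, versus a quarter of a millisecond to a site 50 km away. No amount of extra bandwidth removes that gap, which is why moving compute closer to the user matters as much as building bigger pipes.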

In another sense, time to cloud is the length of time that passes from the moment your organization decides to connect to the cloud to the moment that the connection is made. How long it takes depends on the providers involved in establishing that interconnection.

We’re starting to see alliances forming between mobile network operators (MNOs) and cloud providers, such as Verizon with Microsoft or AT&T with AWS. This convergence of cloud providers and mobile providers is precisely what will enable the reductions in time to cloud that businesses need to keep pace in this hyperconnected environment.

But we’re just on the cusp of that. The utility that we call the cloud is still highly concentrated in very few regions. To serve enterprises the way they need to be served, it will have to become massively more distributed. Enabling that convergence is a process that will likely take more than a decade. Right now, we have a vision of what computing needs to be in the future – but we’re only at the beginning of the journey.

Note: This article was previously published by the Forbes Technology Council.