Hot or Not? How Data Center Thermal Standards Impact Energy Use and Costs

It’s no secret that energy consumed by data centers will mushroom as the demand for digital services and higher computing power continues to grow. Every activity on the internet passes through a vast network of data centers that collectively house billions of gigabytes of information. It takes enormous amounts of energy to store and process all this information which, in turn, generates a lot of heat.

Just One Degree Makes a Difference

Today, data centers are getting denser and hotter, driven in part by increasingly power-dense racks of servers. Higher density computing simply requires more cooling, and with cooling accounting for up to 40% of the total energy used within a data center, any efficiencies gained could make a tremendous difference.1 That’s where temperature control comes into play.

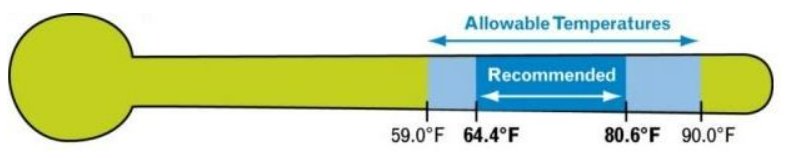

The industry relies on guidelines set by the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE) for thermal standards within data centers. In the past, you may have wanted to wear a jacket when going into a data center, with the average temperature kept at just 68 degrees Fahrenheit. Some even operated at a cold 55 degrees. “The colder the better,” it was said. The rationale was to tightly control the environment to minimize risk to IT equipment during a power quality event. Efficiency was not given much consideration at the time.

A lot has changed since the original guidelines were put in place, as it was discovered that IT equipment could, in fact, operate reliably at higher temperatures. Today, ASHRAE’s recommended temperature range is 64.4°F to 80.6°F at the inlet of the server.
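To make the recommended range concrete, here is a minimal Python sketch that converts it to Celsius and checks whether a server inlet reading falls inside it. The function names and the sample readings are illustrative assumptions; only the 64.4°F to 80.6°F range comes from the text.

```python
# Recommended server inlet range cited in the article, in degrees Fahrenheit.
ASHRAE_RECOMMENDED_F = (64.4, 80.6)


def f_to_c(temp_f: float) -> float:
    """Convert degrees Fahrenheit to degrees Celsius."""
    return (temp_f - 32) * 5 / 9


def inlet_in_recommended_range(temp_f: float) -> bool:
    """Return True if an inlet reading (in Fahrenheit) is inside the range."""
    low, high = ASHRAE_RECOMMENDED_F
    return low <= temp_f <= high


if __name__ == "__main__":
    low_c, high_c = (round(f_to_c(t), 1) for t in ASHRAE_RECOMMENDED_F)
    print(f"Recommended range: {low_c}°C to {high_c}°C")

    # Hypothetical readings: the old 55°F and 68°F set points, plus a warm one.
    for reading in (55, 68, 82):
        status = "inside" if inlet_in_recommended_range(reading) else "outside"
        print(f"{reading}°F is {status} the recommended range")
```

Under these assumptions, the old 68°F set point sits inside the recommended range, while a 55°F room is colder than the range calls for.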

Because cooling systems run every hour of every day to maintain an optimal computing environment, they consume a large amount of energy, and any practice that reduces that demand is advantageous. Raising the temperature by just one degree can reduce cooling costs and yield big energy savings, without sacrificing uptime.2

How much can we save by being more efficient? Let’s say we raised chilled water and server inlet temperatures by 1°F, and by doing so we were able to reduce the facility load by 200 kilowatts (kW). Costs per kilowatt-hour (kWh) vary across the country, but assume a rate of $0.20 per kWh. The math says: 200 kW x $0.20/kWh x 24 hours x 365 days = $350,400, or roughly $350,000 in energy savings per year!
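The arithmetic above can be checked with a short Python sketch. It assumes the load reduction is constant around the clock and that a single flat rate applies all year; the function name is illustrative. The exact product is $350,400, which rounds to the figure of about $350,000.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_savings_usd(load_reduction_kw: float, price_per_kwh: float) -> float:
    """Annual cost avoided by a constant reduction in facility load.

    Assumes the reduction holds for every hour of the year and that
    the electricity rate is flat.
    """
    energy_saved_kwh = load_reduction_kw * HOURS_PER_YEAR
    return energy_saved_kwh * price_per_kwh


if __name__ == "__main__":
    savings = annual_savings_usd(load_reduction_kw=200, price_per_kwh=0.20)
    print(f"Estimated annual savings: ${savings:,.0f}")
```

Changing the rate or the load reduction shows how sensitive the result is: at $0.10 per kWh, the same 200 kW reduction would be worth half as much.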

It’s Not as Simple as Adjusting the Thermostat

Back when energy wasn’t a major expense or concern, most data center providers simply followed the norm: an excessively cool data center meant that heat-related downtime was nearly non-existent. However, larger power bills and sustainability initiatives have changed all that.

Today, data centers are running hotter – a lot hotter – than ever before. Some data centers pull in outside air, a technique called free cooling. In its Belgium data center, which is cooled only by outside air, Google will let the temperature rise so high that technicians can only work in the space for limited amounts of time. Data centers are no longer about comfort cooling – they are all about the equipment and responsible energy management.

Increasing the temperature, however, does not come without consequences. Heat can jeopardize the health of IT equipment; if equipment is not installed properly and monitored, higher temperatures can lead to short cycling and airflow problems. In the worst case, raising the temperature could have catastrophic impacts on operations or business continuity. Understanding the installed equipment and room dynamics is key before raising chilled water or supply air temperatures.

Raising the temperature can also increase airflow requirements, which may offset efficiency gains. If the data center houses legacy IT equipment and cooling systems, the rise in temperature could render any efforts moot.

One thing is for certain: Ecosystems within data centers are complex and it takes careful planning to optimize the temperature to ensure efficiency gains are beneficial.

Strategic Practices to Optimize Temperature

Temperature guidance from ASHRAE opened opportunities for efficient cooling methods to reduce loads. Data centers that optimize thermal practices are gaining traction by utilizing proven technologies and new techniques to reduce the power consumed by cooling systems. In addition to raising the temperature, efficient data center cooling design includes:

- Liquid cooling, which leverages more effective heat transfer from the computer to water and can support higher densities.
- Free cooling, which introduces cooler outside air into the computer room or uses it to cool the chilled water feeding the space. This offloads work done by mechanical systems.
- Hot and cold aisles, which allow for more targeted airflow, moving air to the specific places where it is needed while eliminating waste.
- Air-side optimization, which places perforated tiles in the precise positions needed to cool live racks and blanks off unneeded tiles to eliminate waste.

Monitoring temperature and humidity is also key to understanding and optimizing temperature within the data center and inside server rooms. Tools like MyCoreSite allow customers to see metrics including temperature, humidity and power usage in near real-time and historically. This visibility enables customers and providers to track progress when it comes to sustainability goals.

Continuing this Sustainable Journey

Naturally, power consumption will grow along with the rising demand for digital services. Even with all the innovations for more efficient cooling systems and practices, data centers will be pushed to their limits. However, when the temperature is increased by just one degree, data center providers and customers alike will see significant results, not just in savings but also in carbon footprint. As hardware technology improves in resiliency, we could see temperatures increase even more in data center spaces. The days of needing a hooded sweatshirt in our data centers are long gone! It is warm in here, and that is a great thing.

Download the CoreSite Sustainability Report to learn more about our commitment to sustainability, and contact us to learn how CoreSite’s facilities operate at optimal temperatures to help you save on cooling costs and reduce your carbon footprint.